Archive

Some Hidden Facts: Stored Procedure and Its Optimization

We create stored procedures in database applications for several reasons and benefits like enhanced performance, security, code maintenance, etc. But with time we may see that these stored procedures are not performing well as expected. There can be many reasons like dependent objects (tables, indexes, execution plans, and data size) changed or stored procedures executing improperly. So we have to be a little bit careful from the beginning of creating stored procedures to execution.

I like to summarize few points about all these things.

A. Stored Procedure Recompilation

When creating a stored procedure, we can specify WITH RECOMPILE option in it. But such stored procedure will never benefit from the cached execution plan as each time it is executed; it forces the cached execution plan to invalidate or flush and create new plan based upon the parameters passed, if any, to it. I do not see any such big benefits of this option. But one may find this useful when such stored procedure will return results or execute only selective part of the stored procedure body based upon supplied input parameters. For example, statements within If-block or Select-Case block based upon input parameters.

But I still feel one should go after the new feature of SQL Server 2008 that helps recompile statement level queries rather than whole stored procedure. But this option will be quite useful as such recompilation is dependent upon input data. Let’s say if you are executing the stored procedure by supplying input parameter like ‘FirstName’ or ‘LastName’ or ‘DateOfBirth’ at a time, then statement-level query recompilation option is better. To use this method, one has to specify that SQL-statement within stored procedure with RECOMPILE query hint.

For example,

CREATE PROC dbo.uspExample

@x_input AS INT,

@y_input AS INT

AS

If @x_input = 1

BEGIN

SELECT x, y, z

FROM dbo.tblXYZ

WHERE xColumn >= @x_input

OPTION(RECOMPILE); —See here the query hint

End

If @y_input = 2

BEGIN

SELECT x, y, z

FROM dbo.tblXYZ

WHERE yColumn >= @y_input

END

GO

Another tool that we can use is sp_recompile system stored procedure. This procedure forces recompile of user defined stored procedure next time it is run.

Let’s look at its syntax first.

Sp_recompile ‘@somedependent_object’;

Here, ‘@somedependent_object’ can be either table or view or another stored procedure or even trigger. If this ‘@somedependent_object’ is table name, then sql server will compile all the stored procedures that references this table. If this object name is some stored procedure, then this stored procedure will recompile next time it is run.

This option is good when the table properties are changed, and this table is in use of many other stored procedures. Instead of recompiling each and every such depending stored procedure, a simple sp_recompile will do enough, and with no server restart!

But there are many other scenarios when stored procedure recompilation can happen automatically. If server is running out of memory, the execution cache will get flushed. If the stored procedure has some session specific SET modifiers (like LOCK TIMEOUT, DATEFIRST, ANSI_WARNINGS, etc), then also stored procedure can recompile.

Situations like when we mix DDL and DML statements together inside stored procedure may also cause stored procedure recompilation. For example, most of us create temporary tables inside stored procedure. Then, do DML operations based upon values in these temporary tables. Even this type of stored procedure is forced to recompile to create new plan according to such new temporary tables. For this type of stored procedure, one should opt for statement-level recompilation by using RECOMPILE hint in that DML statement following DDL statements.

But what is alternative to temporary tables here? We can use table variables instead! Or, if we cannot do without temporary tables, then we should write such DDL statements in the beginning of stored procedure body itself so that multiple recompilations are not happening for a single call to this stored procedure.

So stored procedure recompilation can be harmful and useful both depending upon situations. One has to think twice whether recompilation is required or not.

B. Stored Procedure Name

We should always create a stored procedure with a full naming convention. Schema name should always prefix the stored procedure name. Schema name will help sql server name resolution easily when it is called. This helps sql server in which schema to query the stored procedure.

C. Table Indexes

Tables should have proper indexes and compiled time to time as indexes may be weird off after some time due to huge data insertion or deletion.

This is all about some useful facts about stored procedure.

T-SQL and CLR Code in .Net 3.5 and SQL Server 2008

Due to CLR (Common Language Runtime) integration with SQL Server 2005/2008, programmers can now multiply the power of database programming using T-SQL and advanced programming languages like C# and VB.Net. We can write stored procedures, user-defined functions, triggers, aggregates and user-defined types in managed code. But we have to know what is best at what situation. T-SQL is still the best way to interact with database server and data. But what when T-SQL has to access files, environment variables or registry? Then we use extended stored procedures to perform such operations. Since extended stored procedures are not managed code, it cannot be considered a good option. But since SQLCLR objects are managed code and run within SQL Server (in-process), it can be a good option.

T-SQL code can run only at the server end, but SQLCLR code can run at both the client and server ends. And hence SQLCLR code can perform CPU intensive tasks at both the ends. This is one of the most compelling reasons to choose SQLCLR object over T-SQL code. Now a day, even client PCs are very powerful. SQLCLR objects can be used to perform complex string operations, encryption-decryption, array operations, accessing external resources like files and registry, etc. Apart from all these, we should not forget the benefits of .Net code.

Let’s take a simple example to calculate factorial of a number in both T-SQL and SQLCLR.

CREATE FUNCTION dbo.SqlFactorial(@factno decimal(38, 0))

RETURNS decimal(38, 0)

AS

BEGIN

DECLARE @temp decimal(38, 0)

IF (@factno <= 1)

SELECT @temp = 1

ELSE

SELECT @temp = @factno * dbo.SqlFactorial(@factno – 1)

RETURN @temp

END

GO

This will create one user-defined function called dbo.SqlFactorial.

Now create SQLCLR user-defined function in .Net 3.5.

Steps:

Create one project: ->Visual C# -> Database – > SQL Server Project.

A database reference will be asked for where to create the object. The wizard will guide you; it’s simple.

Right click on the project name, select Add -> User-Defined Function… Name it CLRFactorial.

Write a factorial method as:

using System;

using System.Data;

using System.Data.SqlClient;

using System.Data.SqlTypes;

using Microsoft.SqlServer.Server;

public partial class UserDefinedFunctions

{

[Microsoft.SqlServer.Server.SqlFunction]

public static Double CLRFactorial(Double factno)

{

//

if (factno <= 1)

factno =1;

else

{

factno = factno * CLRFactorial(factno – 1);

}

return factno;

}

};

To test it, build the project. Modify the Test Scripts – > Test.sql file in the solution explorer of the same project to call this method: Select dbo.CLRFactorial(21);

Run the project in debug or non-debug mode to see its result in the output window.

Now it’s time to deploy this assembly to SQL Server database whose reference we added when following SQL Server Project wizard. Choose Build – > Deploy Solution. This will add CLRFactorial assembly in the database node at: -> Programmability -> Assemblies -> CLRFactorial. Then, enable CLR in SQL Server by running following command.

EXEC sp_configure ‘clr_enabled’, 1;

RECONFIGURE;

By this time, we have two user-defined functions to calculate factorial of a number: SqlFactorial and CLRFactorial. Test both the functions in Query Analyzer for numbers 5, 10, 25, 30, 32 and so on. Now run.

SELECT dbo.SqlFactorial(33);

A lovely message comes in result as:

Maximum stored procedure, function, trigger, or view nesting level exceeded (limit 32).

But we do not get such error message with SQLCLR function. We get result 8.68331761881189E+36 in exponential form.

See the benefit of SQLCLR code! But one should also know the PERMISSION_SET of such assembly in SQL Server before writing magical code in .Net.

MERGE Statement in SQL Server 2008

While working with table records from more than one table, there is often need of synchronizing data across many tables. For this purpose, we generally write UPDATE, INSERT and DELETE commands one after another based upon certain conditions. Oracle has already MERGE command to perform inserts and updates into a single table in a single command since Oracle9i. But with SQL Server 2008, there is MERGE command to do updates, inserts and deletes in a single statement. MERGE statement will take the source data from a table or view or query, and then perform DML operations (insert, update, delete) if the right conditions match.

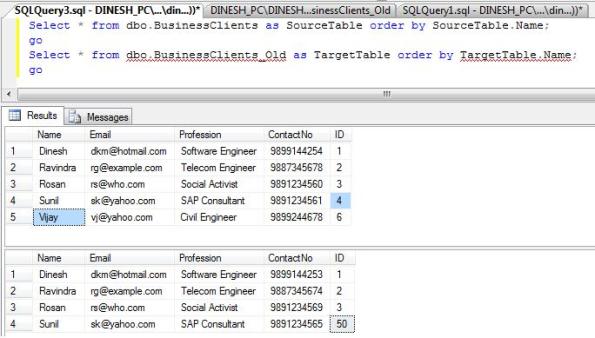

Let’s see from an example where we have two tables: dbo.BusinessClients_Old as ‘Target’ table and dbo.BusinessClients as ‘Source’ table. We are going to synchronize the records between these two tables. We will update ‘ContactNo’ in Target table, insert new record into Target table that is only in Source table and delete unmatched record in Target table. The result will be synchronized records between both the tables.

Fig 1: Initial data found in both the tables

Fig 1: Initial data found in both the tables

Now, issue a MERGE command as:

MERGE dbo.BusinessClients_Old as tblTarget

USING dbo.BusinessClients as tblSource

ON (tblTarget.ID = tblSource.ID)

WHEN MATCHED THEN

UPDATE SET tblTarget.ContactNo = tblSource.ContactNo

WHEN NOT MATCHED BY SOURCE THEN

DELETE

WHEN NOT MATCHED THEN

INSERT (Name, Email, Profession, ContactNo, ID)

VALUES (tblSource.Name, tblSource.Email, tblSource.Profession, tblSource.ContactNo, tblSource.ID)

OUTPUT $action, inserted.id as InsertedId, inserted.name AS InsertedName, inserted.contactno AS InsertedContactNo,

deleted.id AS DeletedId, deleted.name AS DeletedName;

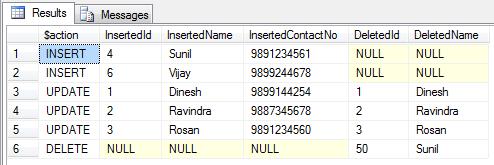

The result is shown in figure below.  Fig 2: Result of MERGE command that is synchronized records now

Fig 2: Result of MERGE command that is synchronized records now

See the updated ContactNo and inserted new record in the Target table: dbo.BusinessClients_Old.  Fig 3: Synchronize records in Target table: dbo.BusinessClients_Old

Fig 3: Synchronize records in Target table: dbo.BusinessClients_Old

Points to be noted:

MERGE statement must end with a semicolon. The OUTPUT clause is optional here which is used to show recently inserted or updated or deleted records in the same session by INSERT or UPDATE or DELETE statement. This OUTPUT clause is there since SQL Server 2005. We may be aware of ‘inserted’ and ‘deleted’ tables if we have made our hands dirty by writing triggers. ‘inserted’ and ‘deleted’ are temporary tables created automatically by SQL Server whenever there is row modification in a table.

We can remove the OUTPUT clause, but it is there to see what all modifications happen when issuing MERGE command. ‘$action’ is a nvarchar(10) column that shows which action was performed by the MERGE command: INSERT or UPDATE or DELETE based upon the condition specified. Better to write ‘$action’ always in lower case as its case is dependent upon database collation for case sensitiveness. For WHEN clause, we have to be little careful. I suggest to refer to SQL Server Books Online at http://msdn.microsoft.com/en-us/library/ms130214.aspx for more details on WHEN clause.

That’s all.

Creating and Using Table-Valued Parameter in C# and T-SQL

With the growing complexity of database applications, we often come across a problem when we need to send multiple rows to database table(s). When SqlBulkCopy class was introduced in .Net 2.0, it was a great relief for all. But if the same requirement arises at back-end processing and mostly in Stored Procedures, programmers often feel something lacking in stored procedure parameters. Workaround solutions are temporary tables, cursors, etc. to overcome.

SQL Server 2008 has a new feature called Table-Valued parameter where we can pass a table valued parameter to stored procedure just like we pass Entity or DTO class object as parameter to Data Access Layer in C# or VB.Net.

Now we can directly pass table like object instead of processing row by row ranging from few to many rows. We used SqlBulkCopy class to post many rows, but still it was not able to pass a table as a parameter.

In this example, we will see how to create a table-value type object, pass it to a stored procedure as a parameter in Sql Server 2008. Also, how to use it programmatically in C# 3.5 as well.

–Step 1: Create a User-defined Table Type

USE AdventureWorks

GO

CREATE TYPE PublisherTableType AS TABLE

(

PublisherID bigint,

PublisherName varchar(50),

PublisherAddress varchar(50),

PublisherContactNo varchar(15)

);

GO

–Step 2: Create a destination table

CREATE TABLE [dbo].[BookPublisher](

[PublisherId] [bigint] NULL,

[PublisherName] [varchar](50) NULL,

[PublisherAddress] [varchar](50) NULL,

[PublisherContactNo] [varchar](15) NULL

) ON [PRIMARY]

–Step 3: Create a procedure to receive data for the table-valued parameter

CREATE PROCEDURE usp_InsertPublisherInfo

@PublisherInfo dbo.PublisherTableType READONLY

AS

SET NOCOUNT ON

INSERT INTO dbo.BookPublisher(PublisherID,PublisherName,PublisherAddress,PublisherContactNo)

SELECT * FROM @PublisherInfo;

GO

–Step 4: Declare a variable of type PublisherTableType

Declare @PubInfo dbo.PublisherTableType;

Insert into @PubInfo

values (1, ‘BPB’, ‘Delhi, India’, ‘1234567890’ ),

(2, ‘PHI’, ‘Mumbai, India’, ‘7892345678’),

(3, ‘Apress’, ‘Chennai, India’, ‘467890034’),

(4, ‘Wrox’, ‘Kolkata, India’, ‘7463643847’);

–Step 5: Execute SP by passing table value type

Exec usp_InsertPublisherInfo @PublisherInfo = @PubInfo;

–Step 6: Select the newly inserted records

Select * from dbo.BookPublisher;

GO

I think steps mentioned in the above example are quite clear. One can see this newly created table value type object under “User-Defined Table Types”. Now, let’s look into another example to exploit the newly created PublisherTableType.

private DataTable PublisherList()

{

//

DataTable TblPub = new DataTable();

TblPub.Columns.Add(“PublisherId”, typeof(Int64));

TblPub.Columns.Add(“PublisherName”, typeof(String));

TblPub.Columns.Add(“PublisherAddress”, typeof(String));

TblPub.Columns.Add(“PublisherContactNo”, typeof(String));

DataRow PubRow;

//Fill the collection values

for (Int16 RwCnt = 0; RwCnt < 10; RwCnt++)

{

//

PubRow = TblPub.NewRow();

PubRow[0] = 100 + RwCnt;

PubRow[1] = “BookPub” + ” – ” + RwCnt.ToString();

PubRow[2] = “PubAddress” + ” – ” + RwCnt.ToString();

PubRow[3] = “0091” + ” – ” + “4567” + RwCnt.ToString();

TblPub.Rows.Add(PubRow);

}

return TblPub;

}

private void SaveDataUsingTableValueType(DataTable PubList)

{

//

String ConString = @”Data Source=DINESH_PC\DINESHPC;Initial Catalog=AdventureWorks;Integrated Security=True”;

SqlConnection SqlCon = new SqlConnection(ConString);

String SpName = “usp_InsertPublisherInfo”;

SqlCommand SqlCmd = new SqlCommand(SpName, SqlCon);

SqlCmd.CommandType = System.Data.CommandType.StoredProcedure;

//Parameter type is SqlDbType.Structured for table valued type parameter

SqlParameter SqlParam = SqlCmd.Parameters.Add(“@PublisherInfo”, System.Data.SqlDbType.Structured);

SqlParam.Value = PubList;

SqlCon.Open();

//Execute the command

SqlCmd.ExecuteNonQuery();

SqlCon.Close();

}

Next, call the save method as: SaveDataUsingTableValueType(PublisherList());

This way we see how this new feature is a bliss.

We have to remember some points with table value type. The READONLY qualifier after table value parameter in stored procedure is required here to mark parameter object as a pointer to the original table value. This way the original content passed cannot be modified inside the stored procedure. Once a table type object is created, it cannot be altered other than dropping and recreating it again with modified definition. Performance wise it is well suited for table rows less than 1000.

Have a nice coding day!CodeProject

New Feature In SQL Server 2008: Row Constructor

I think we all are familiar with INSERT statement in Sql Server 7.0/2000/2005, and may be in Oracle, MySQL, etc. In Sql Server, we have come across INSERT INTO TableName (columns) VALUES (literalValues), or INSERT INTO TableName (columns) SELECT columns FROM TableNameX statement many times. The first type of statement is used when we have to insert one record at a time, while the second statement helps us to insert one or more records depending upon the number of rows returned by SELECT statement. Some even use UNION clause to select multiple rows.

We also know how painful it was to insert multiple records using INSERT INTO TableName (columns) VALUES (literalValues) from DataAccessLayer although different programmers have different approaches to it.

But Sql Server 2008 has now come with a rescue for all programmers with a new feature called “Row Constructor“. Yes, a Class like initialization constructor!

Let’s see it by example.

Example 1:

–Create a new table [BusinessClients] with row values from the list [WHERE Age <= 30]

SELECT Name, Email, Profession, ContactNo

INTO dbo.BusinessClients

FROM

(

VALUES(‘Dinesh’, ‘dkm@hotmail.com’,’Software Engineer’, ‘9899144254’, 28),

(‘Ravindra’, ‘rg@example.com’, ‘Telecom Engineer’, ‘9887345678’, 28),

(‘Rosan’, ‘rs@who.com’, ‘Social Activist’, ‘9891234560’, 30),

(‘Hari’, ‘ha@rnac.com’, ‘Construction Engineer’, ‘9897654890’, 32)

) as Employee(Name, Email, Profession, ContactNo, Age)

WHERE Age <= 30;

Go

–Fetch the inserted records from a newly created table

Select * from dbo.BusinessClients;

Go

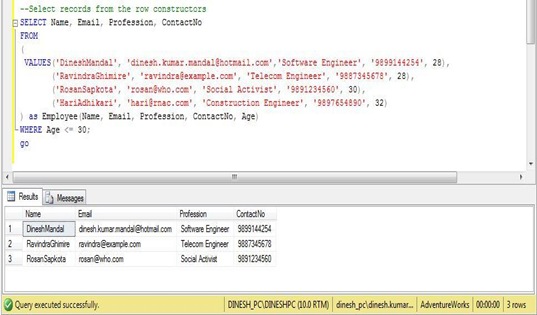

Example 2:

–Select records from the row constructors

SELECT Name, Email, Profession, ContactNo

FROM

(

VALUES(‘DineshMandal’, ‘dinesh.kumar.mandal@hotmail.com’,’Software Engineer’, ‘9899144254’, 28),

(‘RavindraGhimire’, ‘ravindra@example.com’, ‘Telecom Engineer’, ‘9887345678’, 28),

(‘RosanSapkota’, ‘rosan@who.com’, ‘Social Activist’, ‘9891234560’, 30),

(‘HariAdhikari’, ‘hari@rnac.com’, ‘Construction Engineer’, ‘9897654890’, 32)

) as EmployeeDetails(Name, Email, Profession, ContactNo, Age)

WHERE Age <= 30;

Go

See the VALUES clause in parenthesis and separated by comma. This is what we call “Row Constructor”. In example 2, see the derived table name EmployeeDetails and its column names inside the parenthesis. And of course the list of column names we like to select out of VALUES parameter values. These are few things we should take care when using row constructor.

I think this feature is really praiseworthy.

I think we all are familiar with INSERT statement in Sql Server 7.0/2000/2005, and may be in Oracle, MySQL, etc. In Sql Server, we have come across INSERT INTO TableName (columns) VALUES (literalValues), or INSERT INTO TableName (columns) SELECT columns FROM TableNameX many times. The first type of statement is used when we have to insert one record at a time, while the second statement helps us to insert one or more records depending upon the number of rows returned by SELECT statement. Some even used UNION clause to select multiple rows.

We also know how painful it was to insert multiple records using INSERT INTO TableName (columns) VALUES(literalValues) from DataAccessLayer although different programmers have different approaches to it.

But Sql Server 2008 has now come with a rescue for all programmers with a new feature called “Row Constructor”. Yes, a Class like initialization constructor!

Let’s see it by example.

Example 1:

–Create a new table [BusinessClients] with row values from the list [WHERE Age <= 30]

SELECT Name, Email, Profession, ContactNo

Into dbo.BusinessClients

FROM

(

VALUES(‘Dinesh’, ‘dkm@hotmail.com’,‘Software Engineer’, ‘9899144254’, 28),

(‘Ravindra’, ‘rg@example.com’, ‘Telecom Engineer’, ‘9887345678’, 28),

(‘Rosan’, ‘rs@who.com’, ‘Social Activist’, ‘9891234560’, 30),

(‘Hari’, ‘ha@rnac.com’, ‘Construction Engineer’, ‘9897654890’, 32)

) as Employee(Name, Email, Profession, ContactNo, Age)

WHERE Age <= 30;

Go

–Fetch the inserted records from a newly created table

Select * from dbo.BusinessClients;

Go

Example 2:

–Select records from the row constructors

SELECT Name, Email, Profession, ContactNo

FROM

(

VALUES(‘DineshMandal’, ‘dinesh.kumar.mandal@hotmail.com’,‘Software Engineer’, ‘9899144254’, 28),

(‘RavindraGhimire’, ‘ravindra@example.com’, ‘Telecom Engineer’, ‘9887345678’, 28),

(‘RosanSapkota’, ‘rosan@who.com’, ‘Social Activist’, ‘9891234560’, 30),

(‘HariAdhikari’, ‘hari@rnac.com’, ‘Construction Engineer’, ‘9897654890’, 32)

) as EmployeeDetails(Name, Email, Profession, ContactNo, Age)

WHERE Age <= 30;

Go

See the VALUES clause in parenthesis and seperated by comma. This is what we call “Row Constructor”. In example 2, see the derived table name EmployeeDetails and its column names inside the parenthesis. And of course the list of column names we like to select out of VALUES parameter values. These are few things we should take care when using row constructor.

Result of Example 2:

I think this feature is really praiseworthy.

Feedback